Tip #499: What is Pixel Aspect Ratio?


Larry Jordan – LarryJordan.com

Pixel aspect ratios were used in the past to compensate for limited bandwidth.

Pixel aspect ratios determine the rectangular shape of a video pixel. In the early days of digital video, bandwidth, storage and resolution were all very limited. Also, in those days, almost all digital video was displayed on a 4:3 aspect ratio screen.

This meant that the displayed image was 4 units wide by 3 units high, built from a frame 720 pixels across and 480 pixels high. (I use the word “units” because then, as now, monitors came in different sizes, but all had the same resolution regardless of size.)

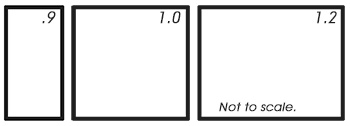

However, standard definition video, though displayed as a 4×3 image, was composed of 720 pixels horizontally by 480 pixels vertically. That is a ratio of 3×2, not 4×3. To get everything to work out properly, instead of being square, each pixel was tall and thin: 0.9 units wide to 1.0 unit tall. (The screen shot shows an exaggerated example of this difference in width.)
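To make the arithmetic concrete, here is a minimal sketch in Python. The function name `display_aspect_ratio` is made up for this illustration, and 0.9 is the rounded pixel width used in this tip, so the result only comes close to 4×3 rather than matching it exactly.

```python
from fractions import Fraction

def display_aspect_ratio(width_px, height_px, pixel_aspect_ratio):
    """Displayed width:height ratio of a frame stored as width_px by height_px.

    pixel_aspect_ratio is the width of one pixel divided by its height.
    (Hypothetical helper for illustration only.)
    """
    storage_ratio = Fraction(width_px, height_px)
    return storage_ratio * pixel_aspect_ratio

# With square pixels, a 720 x 480 frame would display at 3:2, which is too wide.
square = display_aspect_ratio(720, 480, Fraction(1))
print(square)  # 3/2

# With tall, thin pixels (0.9 wide to 1.0 tall, the rounded figure from this tip),
# the same frame displays at 27:20, which is close to 4:3.
thin = display_aspect_ratio(720, 480, Fraction(9, 10))
print(thin, float(thin))  # 27/20 1.35
```

The frame size never changes. Only the assumed shape of each pixel changes how wide the picture looks.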

As digital video started to encompass wide screen, rather than add more pixels, which was technically challenging, engineers changed the shape of the pixel to be fat: a pixel aspect ratio of 1.2 units wide to 1.0 unit tall. This provided wide screen support (16×9 aspect ratio images) without increasing pixel resolution or, more importantly, file size and bandwidth requirements.
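One way to see the effect of thin versus fat pixels is to ask how wide the picture would be if it were redrawn with square pixels. The sketch below assumes the rounded pixel widths quoted in this tip (0.9 and 1.2), and the helper name `square_pixel_width` is invented for the example.

```python
def square_pixel_width(width_px, pixel_aspect_ratio):
    """Width, in square pixels, needed to show the frame at the same shape.

    pixel_aspect_ratio is pixel width divided by pixel height.
    (Hypothetical helper for illustration only.)
    """
    return round(width_px * pixel_aspect_ratio)

HEIGHT = 480  # the frame height is the same in both cases

# Tall, thin pixels: the 720-pixel row squeezes into 648 square pixels (about 4:3).
print(square_pixel_width(720, 0.9), "x", HEIGHT)  # 648 x 480

# Fat pixels: the same 720-pixel row stretches to 864 square pixels (about 16:9).
print(square_pixel_width(720, 1.2), "x", HEIGHT)  # 864 x 480
```

Both cases store exactly 720 × 480 pixels, which is why wide screen cost nothing extra in file size or bandwidth.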

These non-square pixels continued for a while into HD video, with both HDV and some formats of P2 using non-square pixels.
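The same reasoning can be run in reverse: given a stored frame size and the shape the picture should have on screen, work out how fat each pixel must be. The frame sizes below are the commonly cited ones for HDV and DVCPRO HD and do not come from this tip, so treat them as assumptions. The function name is also invented for the example.

```python
from fractions import Fraction

def required_pixel_aspect_ratio(width_px, height_px, display_ratio):
    """Pixel width/height needed for the stored frame to fill display_ratio.

    (Hypothetical helper for illustration only.)
    """
    return display_ratio / Fraction(width_px, height_px)

WIDESCREEN = Fraction(16, 9)

# Assumed frame sizes, not taken from this tip.
print(required_pixel_aspect_ratio(1440, 1080, WIDESCREEN))  # 4/3  (HDV 1080)
print(required_pixel_aspect_ratio(1280, 1080, WIDESCREEN))  # 3/2  (DVCPRO HD 1080)
print(required_pixel_aspect_ratio(1920, 1080, WIDESCREEN))  # 1    (square pixels)
```

A result of 1 means the pixels are square, which is the case for a full 1920 × 1080 frame.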

However, as storage capacity and bandwidth caught up with the need for more pixels in larger frame sizes, pixels evolved into the square pixels virtually every digital format uses today. This greatly simplified all manner of pixel manipulation.

Even so, most compression software still has settings that allow it to work with legacy formats from the days when pixels weren’t square.
